The FDA last week updated its list of AI-enabled medical devices, adding marketing authorizations through the end of 2025. The updated list shows that radiology is maintaining its lead as the medical specialty with the most authorizations.

The FDA’s previous update covered authorizations through the end of September 2025 and showed that the number of AI-enabled radiology devices had crossed the 1,000 mark. The new numbers show continued momentum for medical imaging.

- The agency’s data go all the way back to 1995 (the first cleared radiology device on the list was ImageChecker from R2 Technology/Hologic in 1998).

The new list tracks authorizations through the end of December 2025, and indicates the agency has…

- Authorized 1,451 AI-enabled medical devices since it began keeping track in 1995.

- Authorized 1,104 radiology devices, or 76% of all AI-enabled medical device authorizations.

- In the fourth quarter of 2025, the FDA cleared 72 AI-enabled medical devices, of which 55 (76%) were radiology devices.

- For all of 2025, radiology secured 75% of authorizations, compared to 73% for all of 2024 and 80% for 2023.

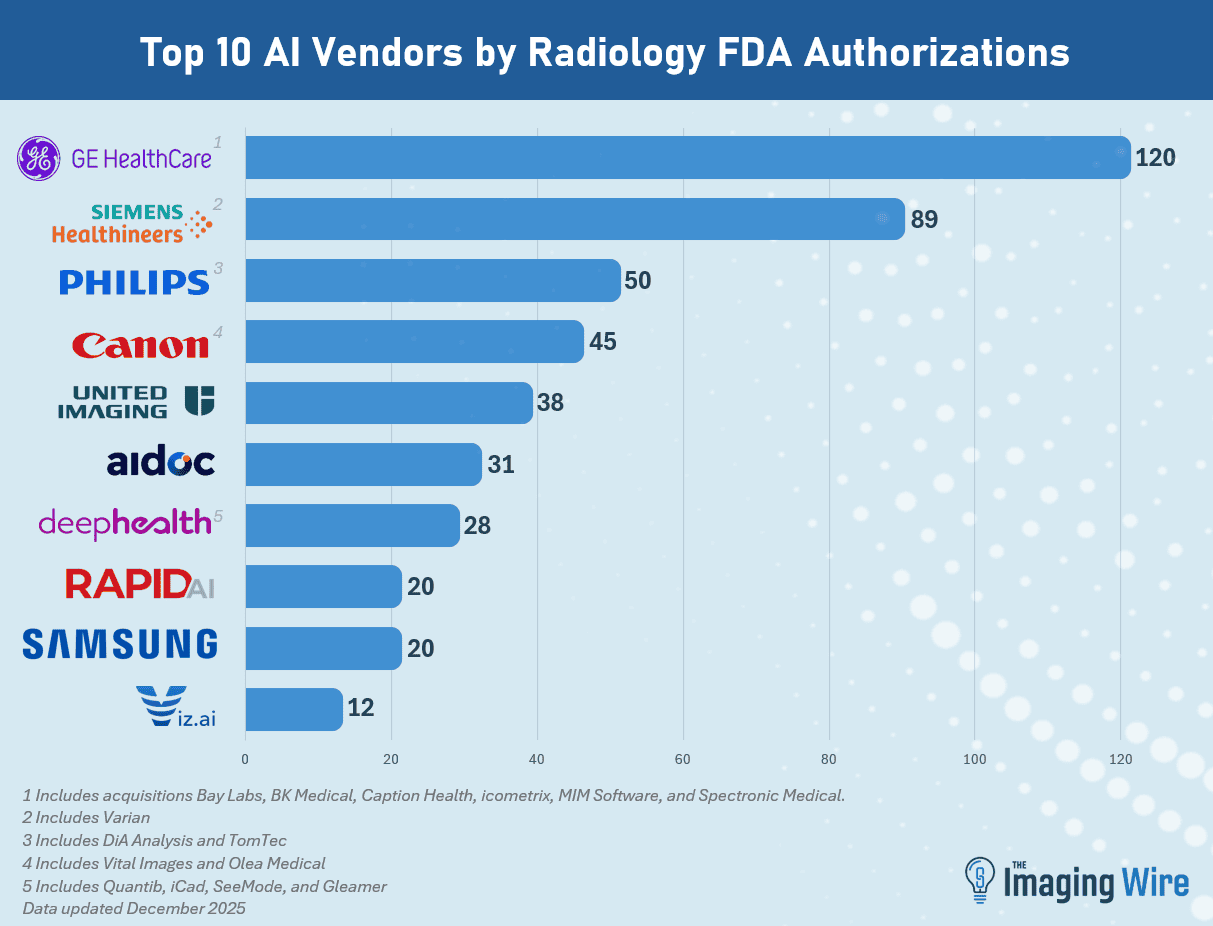

- GE HealthCare retained the top spot as the company with the most radiology AI authorizations at 120 (including those of acquired companies Bay Labs, BK Medical, Caption Health, MIM Software, icometrix, and Spectronic Medical).

- Next is Siemens Healthineers at 89 (including Varian), then Philips at 50 (including DiA Analysis and TomTec), Canon at 45 (including Vital Images and Olea), United Imaging at 38, Aidoc at 31, and DeepHealth at 28 (including Quibim and iCAD).

As we’ve noted in the past, the FDA’s list includes not only standalone software applications, but also imaging hardware with embedded AI applications, such as a mobile X-ray system with AI algorithms for detecting emergent conditions.

The Takeaway

The new FDA list shows radiology’s continued dominance in AI-enabled medical device technology. But an interesting subtext is the ongoing consolidation in the radiology AI space: because acquirers inherit the authorizations of the companies they buy, acquisitive firms can climb the list quickly.