Not all AI is created equal when it comes to analyzing chest X-rays. A new study in Radiology found wide variation in how well seven commercially available chest X-ray algorithms detect lung cancer.

X-ray is by far the most widely used imaging modality. Radiography is often the first imaging exam a patient receives, and it frequently serves as a gateway to other more advanced imaging modalities.

- But radiography also has well-known shortcomings (which is why advanced imaging is needed for follow-up). Could AI help unlock X-ray’s value and make it more useful?

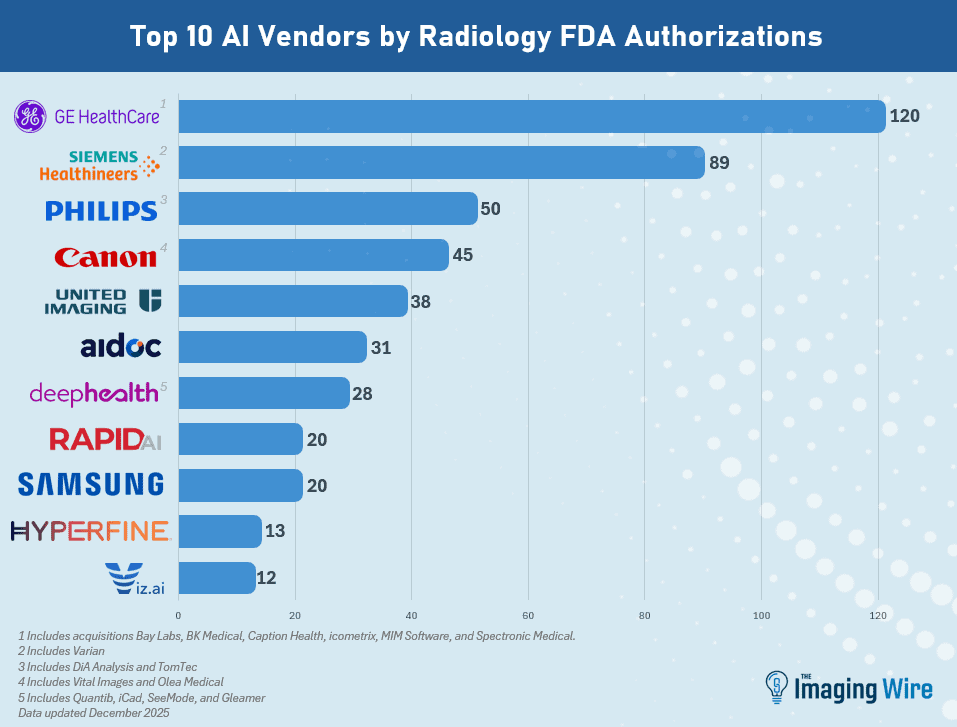

That’s what a host of AI algorithm developers are banking on, but the wide variety of solutions can create confusion for clinicians.

- So U.K. researchers decided to hold an AI bake-off, comparing commercially available algorithms from seven developers for detecting lung cancer on chest X-rays.

The competing companies included Annalise/Harrison.ai, Gleamer, Infervision, Milvue, Oxipit, Qure.ai, and Rayscape. Researchers anonymized performance results from the different products.

In all, the study included chest radiographs from a dataset of 5.2k patients with a real-world lung cancer prevalence rate, with researchers finding…

- Significant variance in algorithm performance by each of the major accuracy measures: sensitivity (21%-78%), specificity (59%-98%), and positive predictive value (1.5%-28%).

- All the algorithms increased the number of false positives, though by widely varying amounts. One model generated only 10 more false positives than radiologists, while another produced – wait for it – over 2k.

- If used to triage patients for follow-up CT exams, one model would generate $1.6k in additional costs while another would produce $327k.
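For readers who want to see how the three accuracy measures above relate, here is a minimal Python sketch. It uses the standard textbook definitions, not the study's own code, and every number in it is hypothetical (a made-up cohort of 1,000 patients with 10 cancers), chosen only to show why positive predictive value can end up in the single digits when disease prevalence is low, even for a model with respectable sensitivity and specificity.

```python
from dataclasses import dataclass


@dataclass
class ConfusionCounts:
    """Counts of model calls versus ground truth (all values hypothetical)."""
    tp: int  # cancers the model flagged
    fp: int  # healthy patients the model flagged
    tn: int  # healthy patients the model cleared
    fn: int  # cancers the model missed


def sensitivity(c: ConfusionCounts) -> float:
    """Share of actual cancers that the model flagged."""
    return c.tp / (c.tp + c.fn)


def specificity(c: ConfusionCounts) -> float:
    """Share of cancer-free patients that the model cleared."""
    return c.tn / (c.tn + c.fp)


def ppv(c: ConfusionCounts) -> float:
    """Share of flagged patients who actually have cancer."""
    return c.tp / (c.tp + c.fp)


def ppv_from_rates(sens: float, spec: float, prevalence: float) -> float:
    """PPV via Bayes' rule, given sensitivity, specificity, and prevalence."""
    true_pos = sens * prevalence
    false_pos = (1 - spec) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)


# Hypothetical cohort: 1,000 patients, 10 with cancer (1% prevalence).
# These are illustrative values, not figures from the Radiology study.
example = ConfusionCounts(tp=7, fp=99, tn=891, fn=3)

print(f"sensitivity: {sensitivity(example):.1%}")
print(f"specificity: {specificity(example):.1%}")
print(f"PPV:         {ppv(example):.1%}")
print(f"PPV (Bayes): {ppv_from_rates(0.70, 0.90, 0.01):.1%}")
```

In this made-up cohort, the model catches 7 of 10 cancers and clears 90% of healthy patients, yet only 7 of its 106 positive calls are real cancers. That is the dynamic behind both the low positive predictive values and the follow-up CT costs: at real-world prevalence, small differences in specificity translate into large differences in false positives.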

What accounts for the variation? An underlying factor is most likely differences in the datasets used for model training.

- In any event, the study underscores the need for more head-to-head comparisons to determine the strengths and weaknesses of individual AI algorithms.

The Takeaway

This week’s study on how AI performance varies between commercially available algorithms initially seems disturbing and might suggest a need for stronger regulatory oversight. But AI’s diversity could be its strength in a future where every patient case is analyzed by multiple different algorithms, each with its own advantages. This could ultimately produce a more complete picture of the patient than any one algorithm on its own.